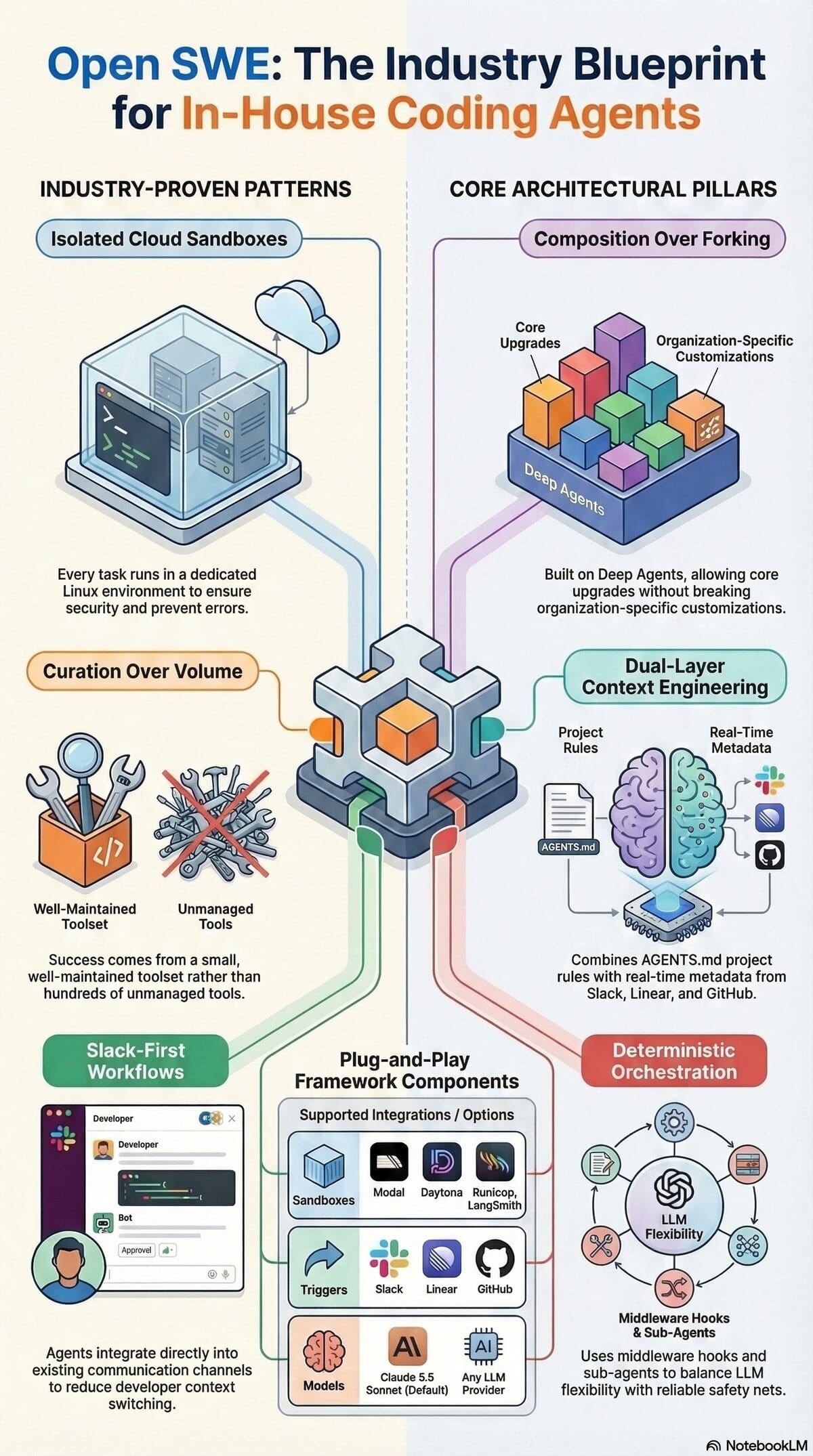

TL;DR: Stripe, Ramp, and Coinbase independently built strikingly similar AI coding agent architectures, and Open SWE now packages those production-tested patterns into an open-source framework any team can adopt. Learn why isolated sandboxes, curated toolsets, and Slack-first interfaces are becoming the standard for engineering teams.

🎙️ Podcast: Open SWE Framework

📺 Video: Open SWE Overview

📑 Slides: Open SWE Blueprint

📝 Deep Dive: Building Internal Coding Agents: A Deep Dive into the Open SWE Framework

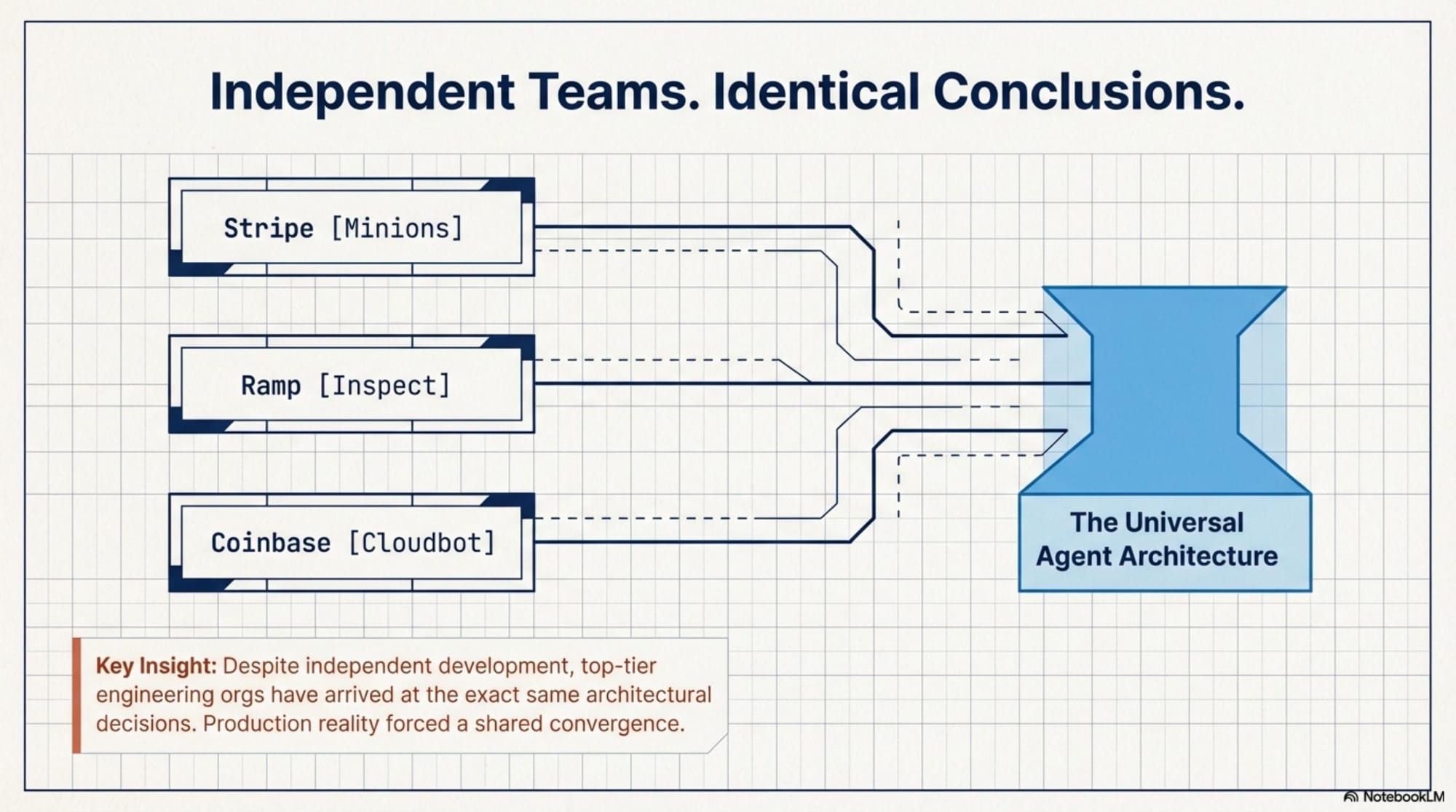

As AI coding assistants evolve, major engineering organizations are moving beyond generic tools and building their own internal coding agents. Interestingly, despite developing these systems independently, industry leaders have converged on remarkably similar architectural decisions.

Enter Open SWE, an open-source framework designed to help teams build their own internal coding agents. Here is a comprehensive look at what Open SWE is, the production patterns that inspired it, its underlying architecture, and how your team can leverage it.

---

What Open SWE Is and Why It Matters

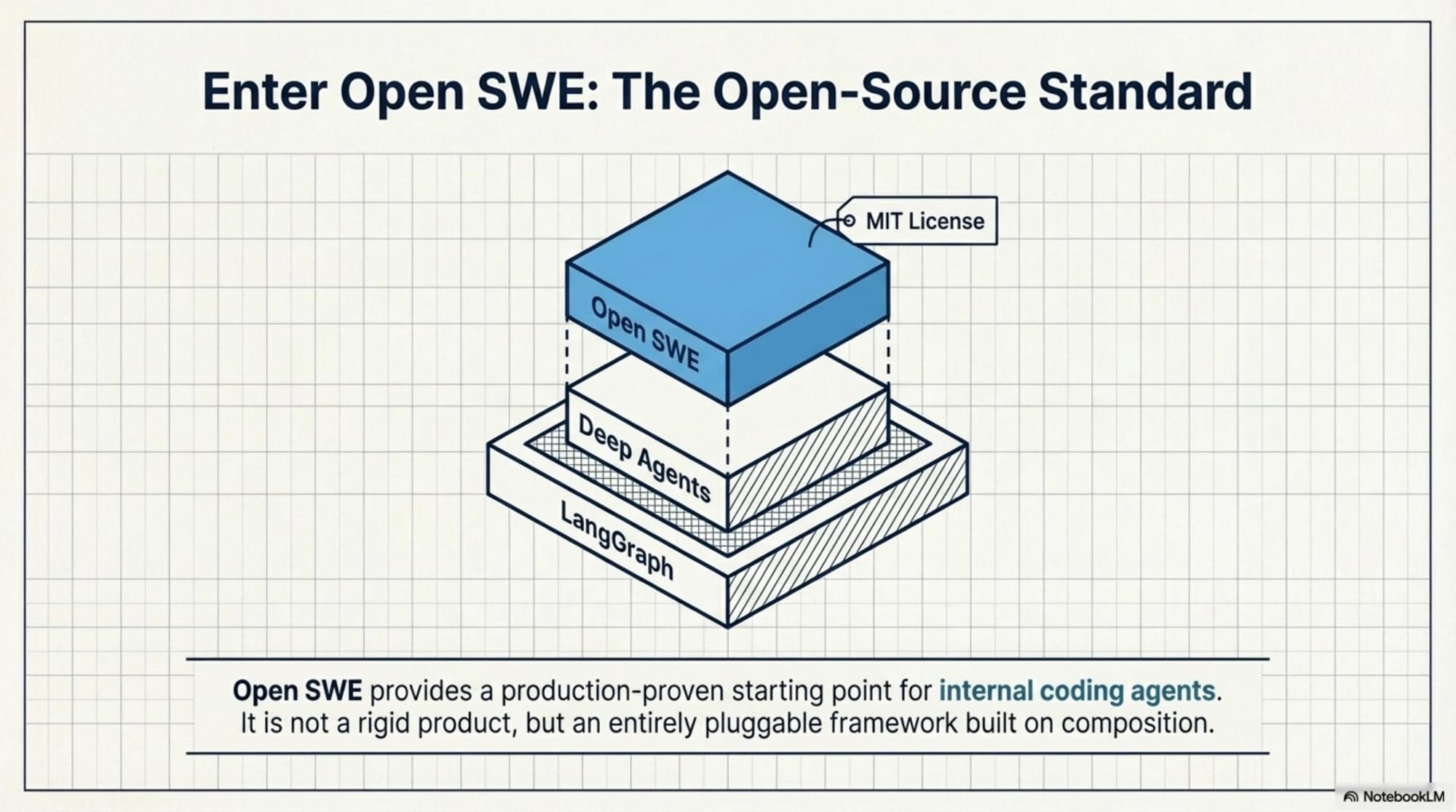

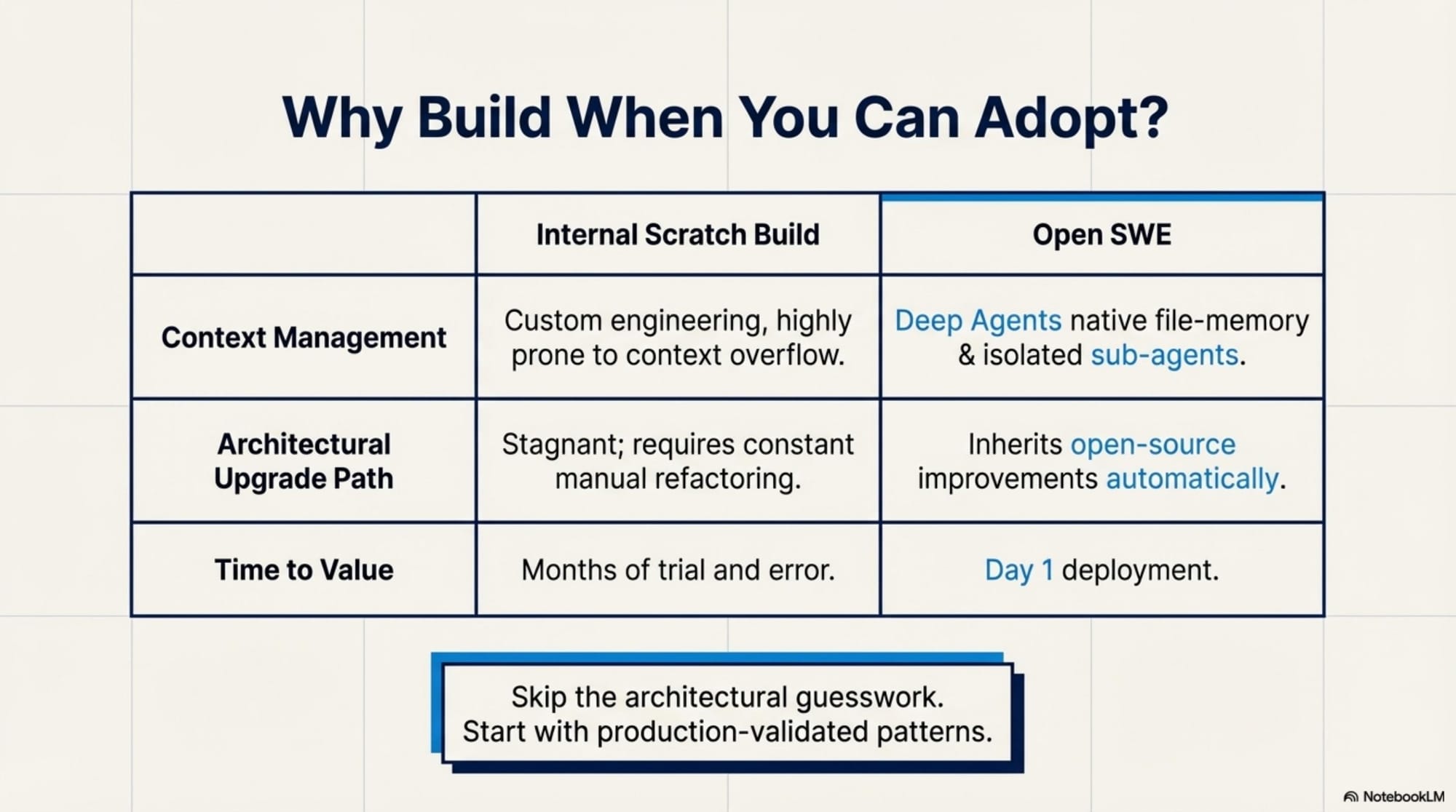

Open SWE is an open-source framework, released under the MIT license, that provides a production-tested starting point for teams looking to adopt internal coding agents.

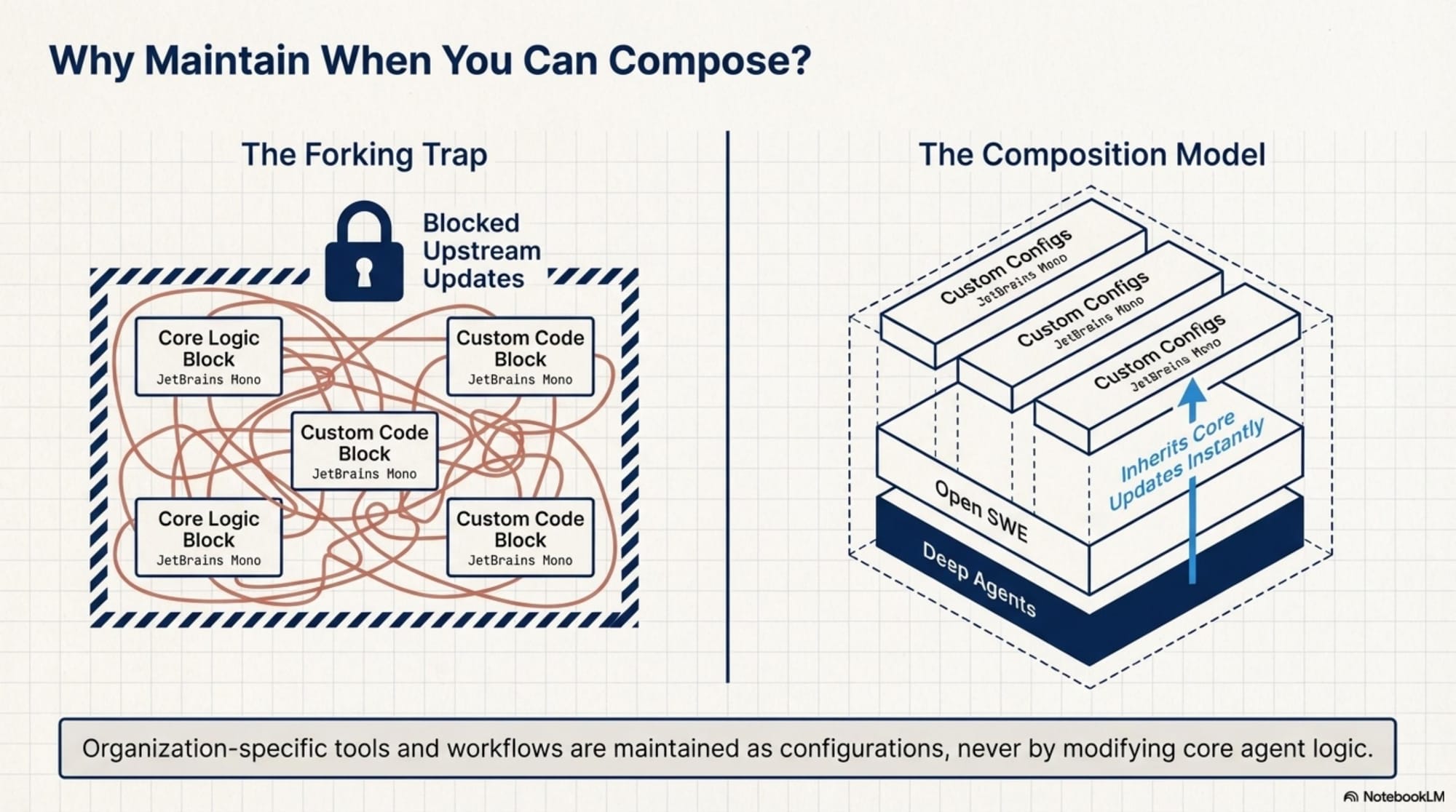

Instead of starting from scratch or trying to adapt generic AI tools to highly specific internal workflows, teams get a customizable foundation that packages the architectural practices of leading engineering organizations. It matters because it uses composition rather than forking: teams build on top of the framework instead of maintaining a modified copy of an existing agent. As a result, organizations can upgrade the underlying framework while their custom tools, workflows, and prompts stay in their own configuration.
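To make the composition-versus-fork distinction concrete, here is a minimal, framework-agnostic sketch. `BaseAgentConfig`, `TEAM_OVERRIDES`, and `compose_config` are hypothetical names for illustration, not Open SWE's actual API. Upstream defaults live in one object, team customizations in another, and the effective configuration is computed by layering the two, so upgrading the defaults never touches the team's file.

```python
from dataclasses import dataclass, replace


# Hypothetical upstream defaults: shipped with the framework, replaced on upgrade.
@dataclass(frozen=True)
class BaseAgentConfig:
    model: str = "default-model"
    tools: tuple[str, ...] = ("read_file", "write_file", "grep", "task")
    system_prompt: str = "You are a coding agent."
    middleware: tuple[str, ...] = ("open_pr_if_needed",)


# Hypothetical team layer: lives in the team's own repository, survives upgrades.
TEAM_OVERRIDES = {
    "extra_tools": ("deploy_preview",),          # internal tool (hypothetical)
    "prompt_suffix": "Follow the conventions in AGENTS.md.",
    "extra_middleware": ("require_ci_green",),   # review gate (hypothetical)
}


def compose_config(base: BaseAgentConfig, overrides: dict) -> BaseAgentConfig:
    """Layer team customizations on top of upstream defaults without editing them."""
    return replace(
        base,
        tools=base.tools + tuple(overrides.get("extra_tools", ())),
        system_prompt=f"{base.system_prompt}\n{overrides.get('prompt_suffix', '')}".strip(),
        middleware=base.middleware + tuple(overrides.get("extra_middleware", ())),
    )


config = compose_config(BaseAgentConfig(), TEAM_OVERRIDES)
print(config.tools)
```

In this sketch, an upgrade changes only `BaseAgentConfig`; `compose_config` reapplies the same overrides, which is the property a fork cannot offer without a manual merge.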

---

Production Patterns from Stripe, Ramp, and Coinbase

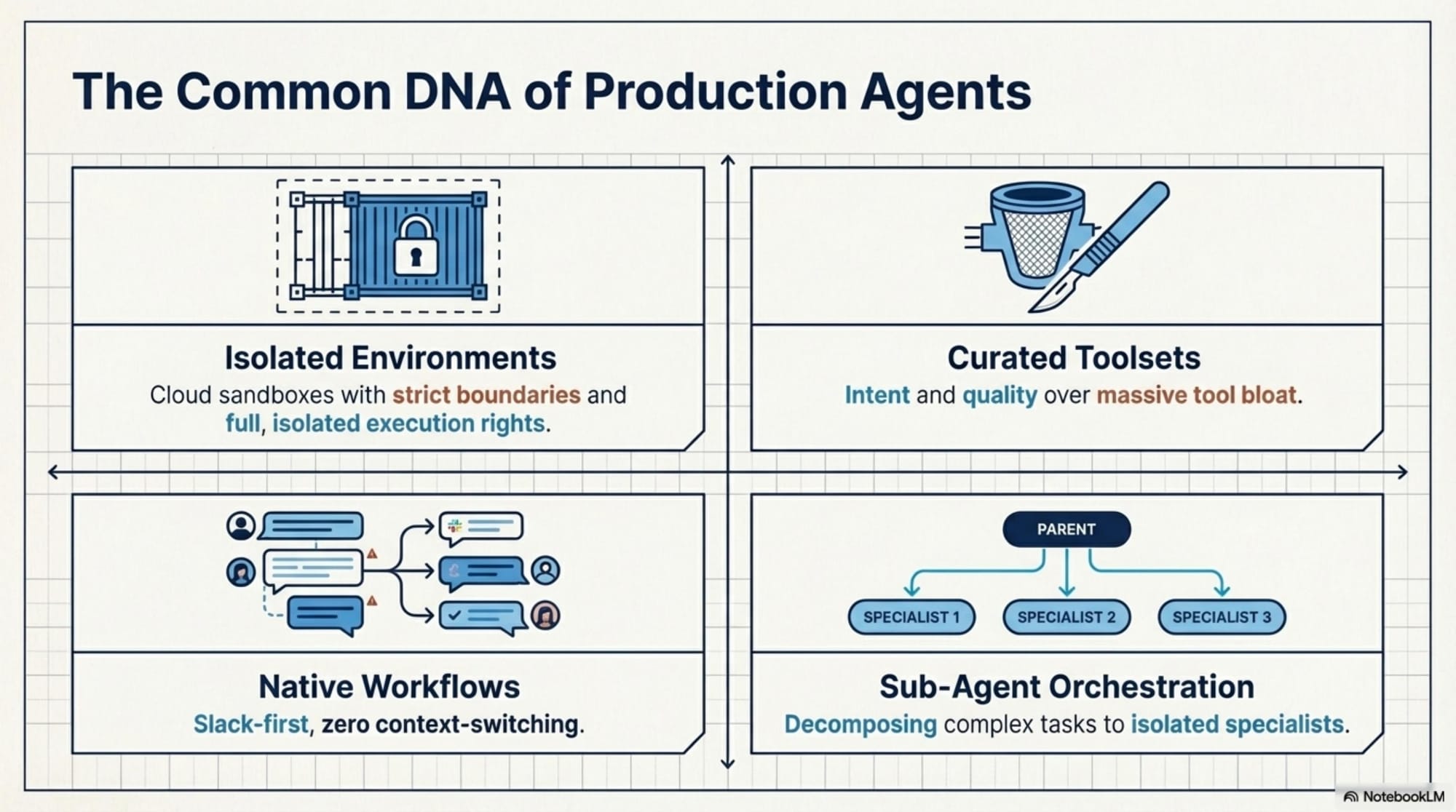

Open SWE's design heavily borrows from the successful internal systems built by industry leaders, specifically Stripe's Minions, Ramp's Inspect, and Coinbase's Cloudbot. These companies independently arrived at several critical design patterns:

Isolated Execution Environments

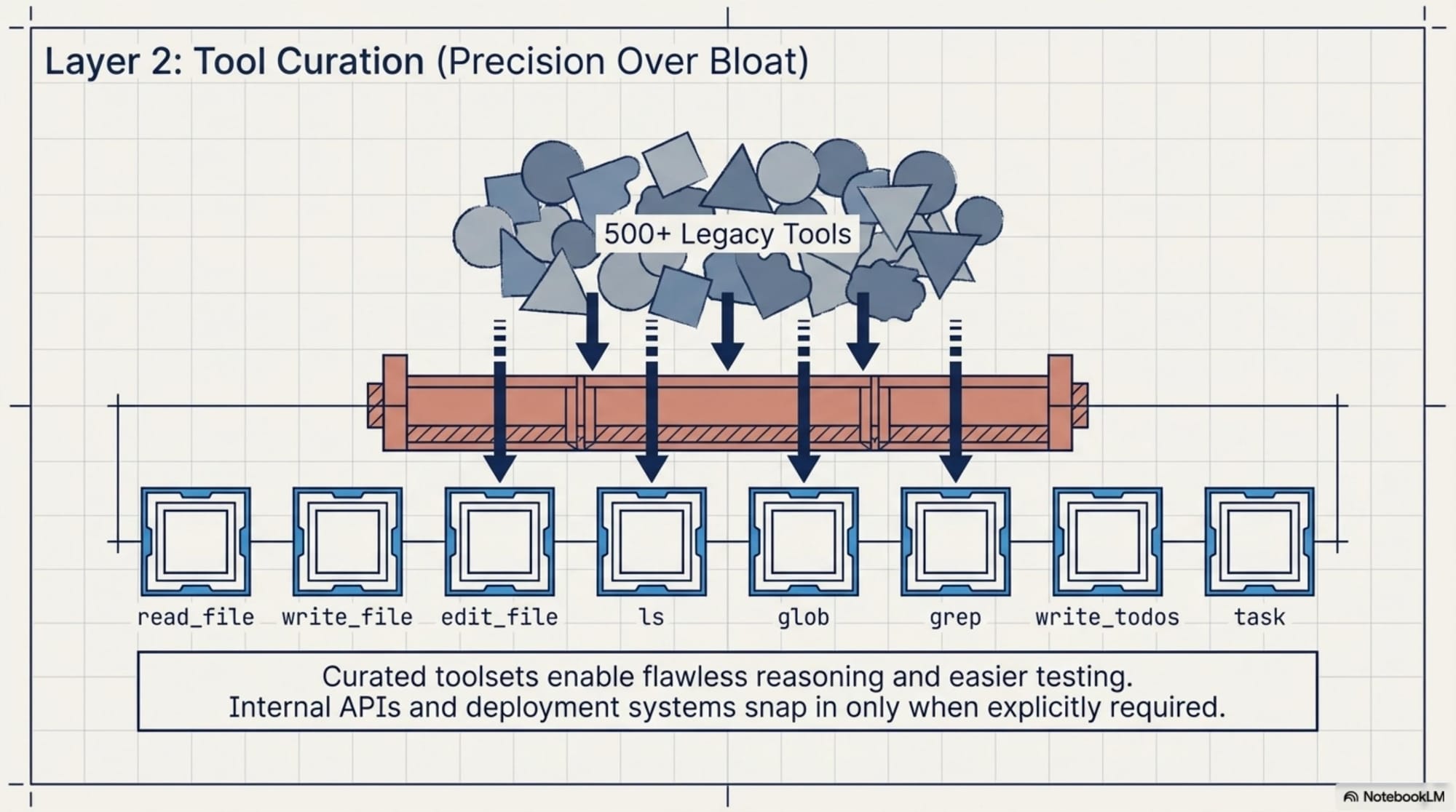

Every task runs in a dedicated cloud sandbox with full shell access within strict boundaries. This isolates the impact of potential mistakes, allowing the agent to execute commands without requiring an approval prompt for every single action.

Highly Curated Toolsets

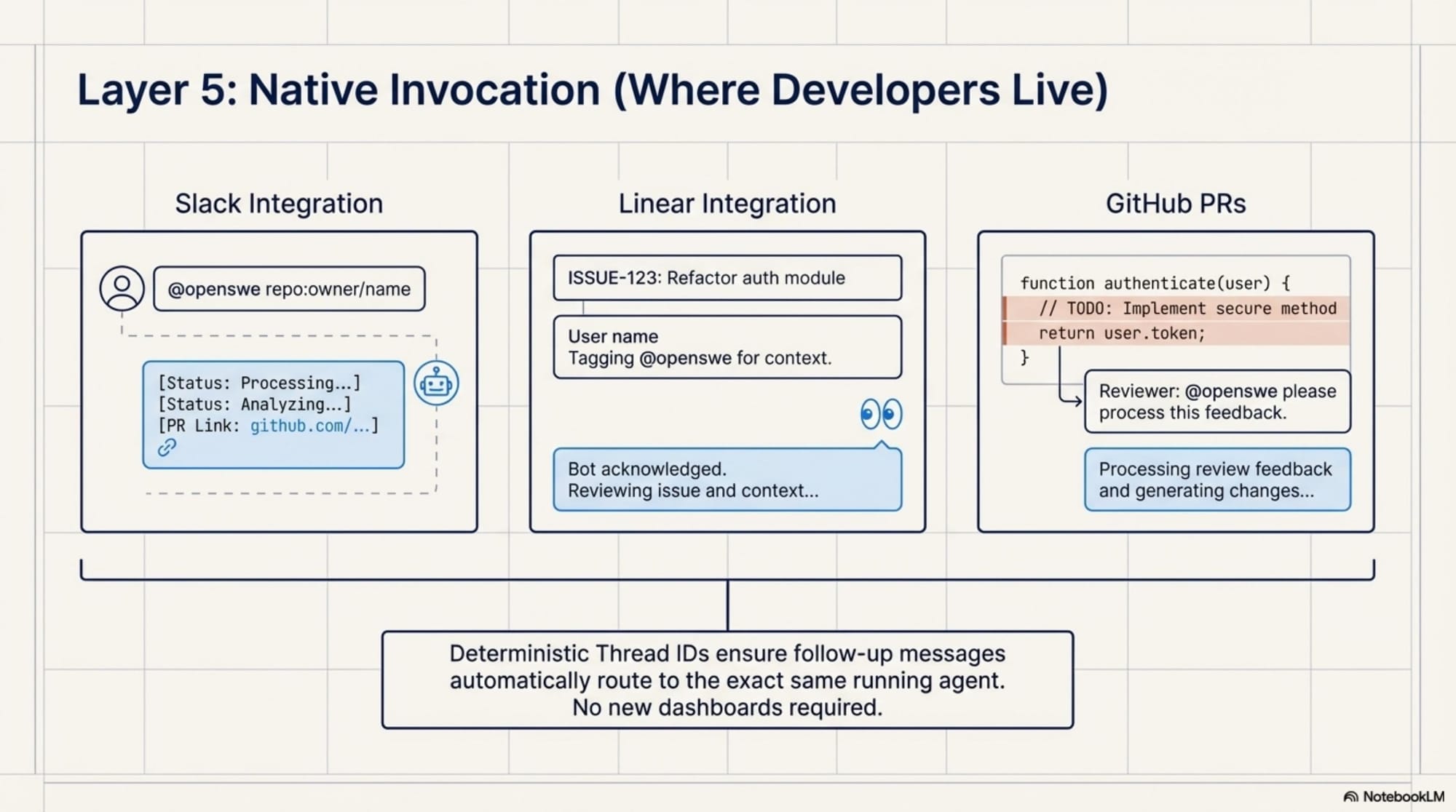

While Stripe's agents have access to roughly 500 tools, the key takeaway is that these tools are rigorously curated and maintained rather than blindly accumulated. Curation is far more important than sheer volume.

Slack-First Invocation

To avoid forcing developers to context-switch into new applications, all three systems use Slack as their primary interface, integrating directly into existing communication workflows.

Rich Starting Context

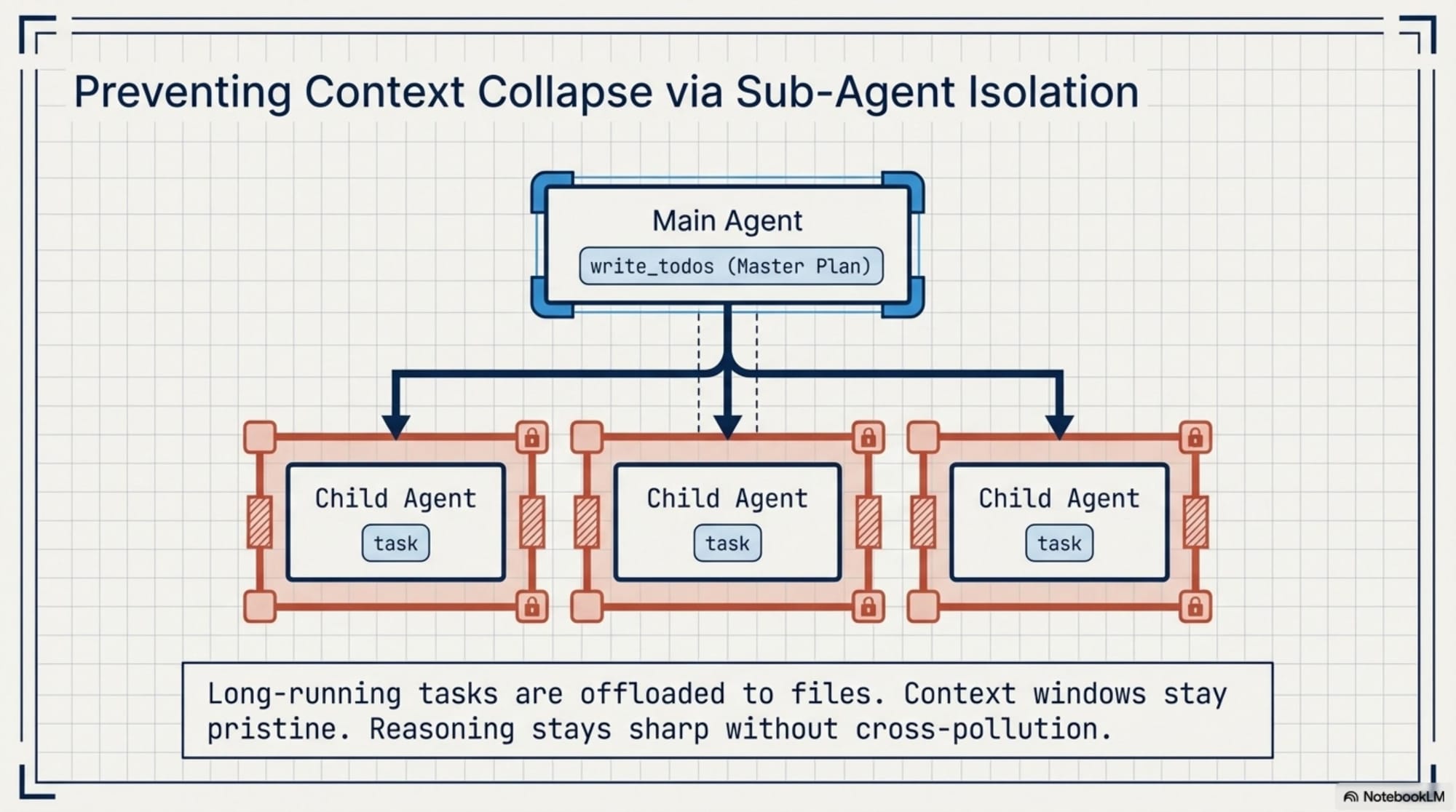

Instead of forcing the agent to waste time discovering requirements through tool calls, these systems pull extensive initial context from Linear issues, Slack threads, and GitHub PRs before the agent even starts.

Sub-Agent Orchestration

Complex tasks are broken down and delegated to specialized child agents, each with its own isolated context and focused responsibilities.

---

Key Architecture Components

Open SWE operationalizes these patterns using a robust, highly modular architecture built on top of Deep Agents and LangGraph.

Deep Agents Harness

Rather than building an agent from the ground up, Open SWE is composed on top of the Deep Agents framework, which provides built-in infrastructure:

- Context Management: It offloads large intermediate data (such as search results or file contents) into file-based memory, preventing context overflow in large codebases.
- Planning Primitives: It includes built-in tools like `write_todos` to structure multi-step tasks and adapt plans as new information emerges.
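The context-offloading idea can be sketched without any framework: when a tool result exceeds a size budget, write it to a file and give the model a short pointer plus a preview instead of the full text. The 2,000-character threshold, the `ContextOffloader` class, and the pointer format are assumptions for illustration; Deep Agents' actual mechanism may differ.

```python
import tempfile
from pathlib import Path

MAX_INLINE_CHARS = 2_000  # assumed per-result budget before spilling to disk


class ContextOffloader:
    """Hypothetical helper: keep small tool outputs inline, spill large ones to files."""

    def __init__(self, workdir: Path) -> None:
        self.workdir = workdir
        self.count = 0

    def process(self, tool_name: str, output: str) -> str:
        if len(output) <= MAX_INLINE_CHARS:
            return output  # small enough to stay in the model's context
        self.count += 1
        path = self.workdir / f"{tool_name}_{self.count}.txt"
        path.write_text(output)
        preview = output[:200]
        # The model sees only a pointer and a preview; it can read the file later.
        return f"[{len(output)} chars saved to {path}]\n{preview}..."


with tempfile.TemporaryDirectory() as tmp:
    offloader = ContextOffloader(Path(tmp))
    small = offloader.process("grep", "src/app.py:12: TODO")
    big = offloader.process("grep", "match\n" * 1_000)
    saved = sorted(p.name for p in Path(tmp).iterdir())
    print(small)
    print(big.splitlines()[0])
```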

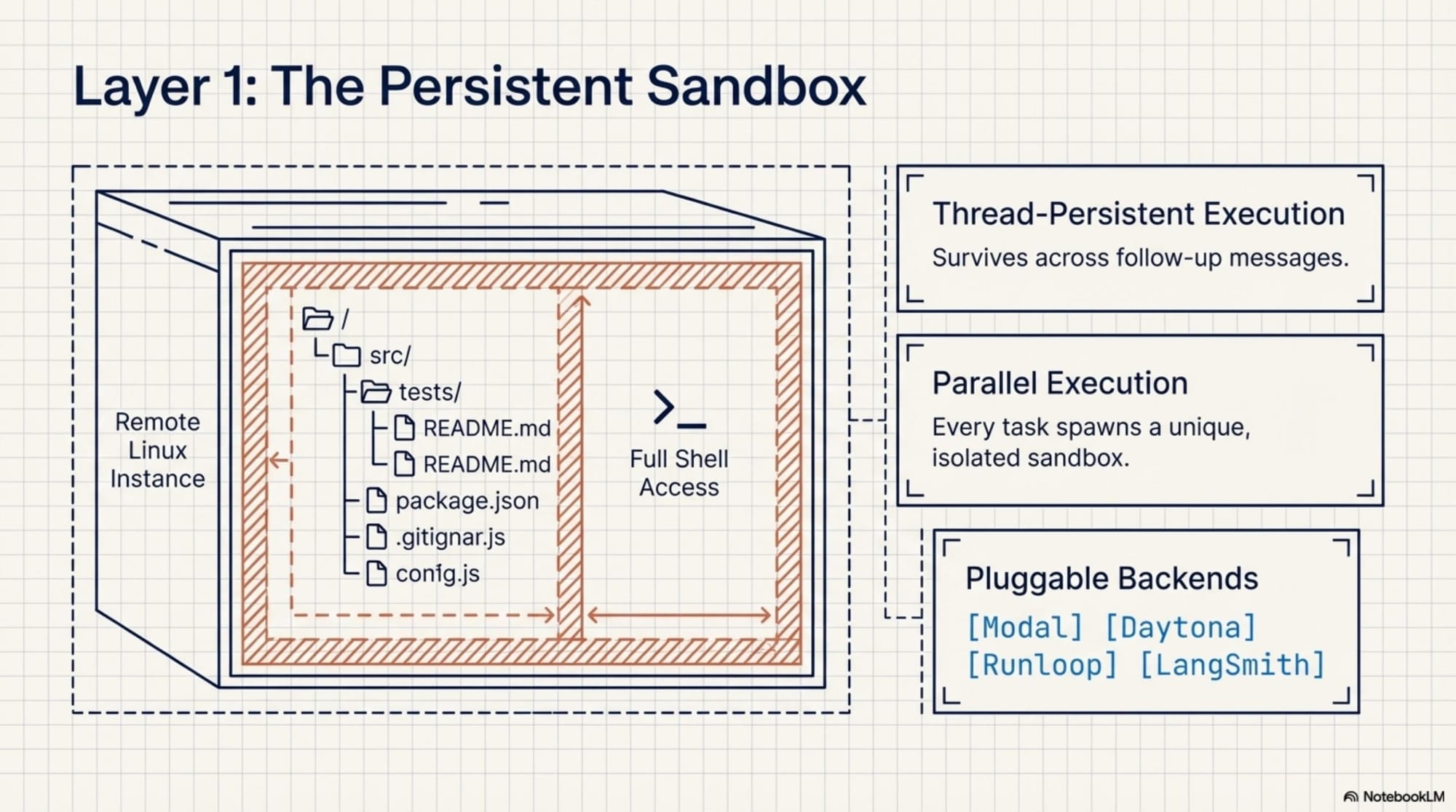

Isolated Cloud Sandboxes

Each conversational thread is assigned a persistent, remote Linux environment. When a task begins, the repository is cloned, and the agent is granted full permissions within that isolated space. Multiple tasks can execute in parallel, and if a sandbox becomes unreachable, the framework automatically regenerates it.

Curated Tools & Context Engineering

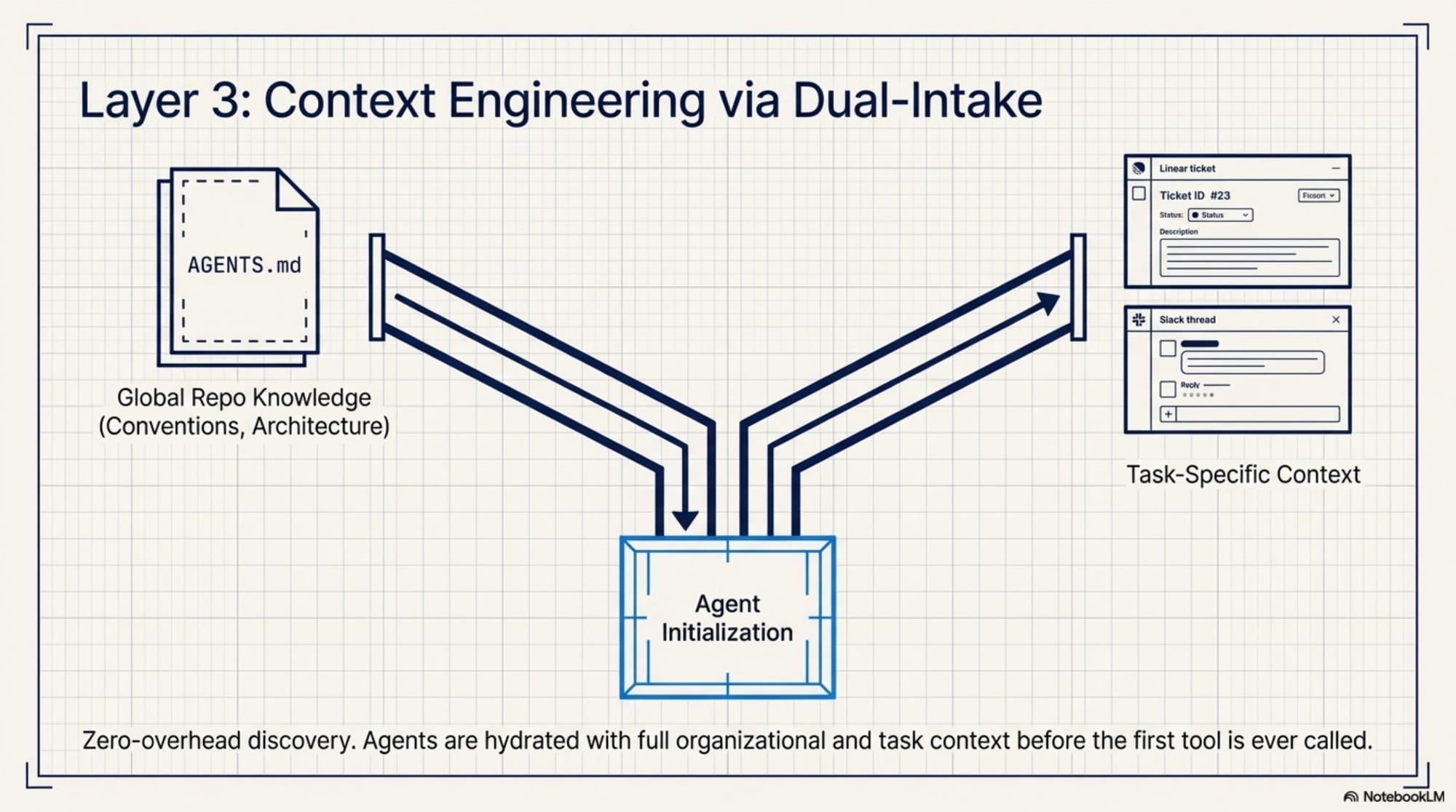

Open SWE ships with a focused set of built-in tools (e.g., `read_file`, `write_file`, `grep`, `task`). Context is managed through a dual-layer approach:

1. Repository Knowledge: An `AGENTS.md` file located at the repository root encodes team-specific conventions, architecture decisions, and testing requirements, which are injected into the system prompt.
2. Task-Specific Context: Information from Linear issues or Slack threads is aggregated to give the agent exactly what it needs for the task at hand.
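A minimal sketch of the dual-layer approach, assuming the system prompt is plain text built from two sources: the repository's `AGENTS.md` (if present) and a task block aggregated from issue and thread data. The `TaskContext` fields and the `build_system_prompt` function are hypothetical; they illustrate the layering, not Open SWE's actual prompt template.

```python
import tempfile
from dataclasses import dataclass, field
from pathlib import Path


@dataclass
class TaskContext:
    """Hypothetical container for task-specific context gathered before the run."""
    title: str
    description: str
    comments: list[str] = field(default_factory=list)
    thread_messages: list[str] = field(default_factory=list)


def build_system_prompt(base_prompt: str, repo_root: Path, task: TaskContext) -> str:
    sections = [base_prompt]
    # Layer 1: repository knowledge from AGENTS.md at the repo root, if it exists.
    agents_md = repo_root / "AGENTS.md"
    if agents_md.is_file():
        sections.append("## Repository conventions\n" + agents_md.read_text())
    # Layer 2: task-specific context aggregated from the issue and chat thread.
    task_lines = [f"## Task: {task.title}", task.description]
    task_lines += [f"- comment: {c}" for c in task.comments]
    task_lines += [f"- thread: {m}" for m in task.thread_messages]
    sections.append("\n".join(task_lines))
    return "\n\n".join(sections)


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "AGENTS.md").write_text("Run `pytest` before opening a PR.")
    prompt = build_system_prompt(
        "You are a coding agent.",
        root,
        TaskContext("Fix flaky test", "test_login fails intermittently.", comments=["Seen on CI"]),
    )
    print(prompt)
```

Keeping the two layers separate means `AGENTS.md` changes only when team conventions change, while the task block is rebuilt for every run.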

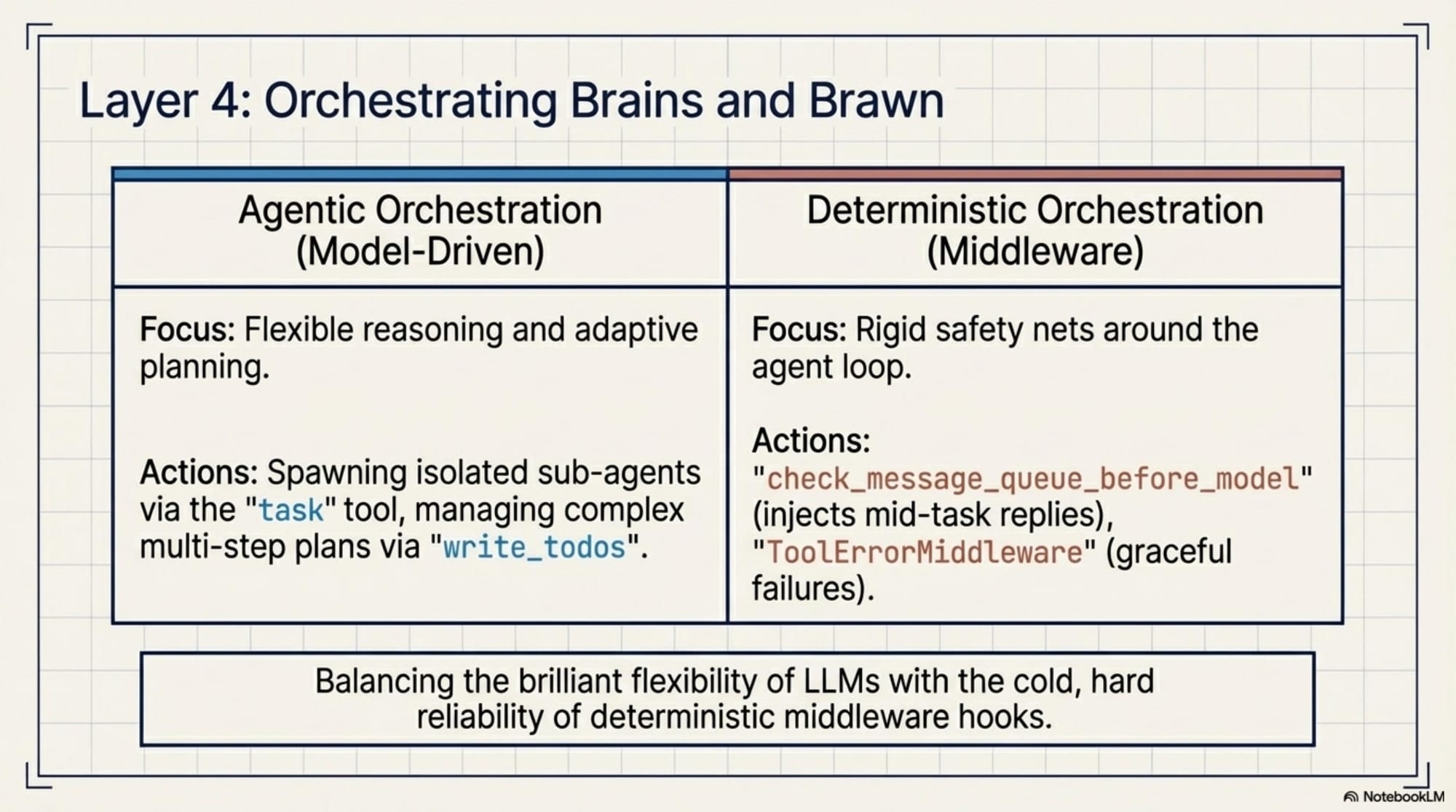

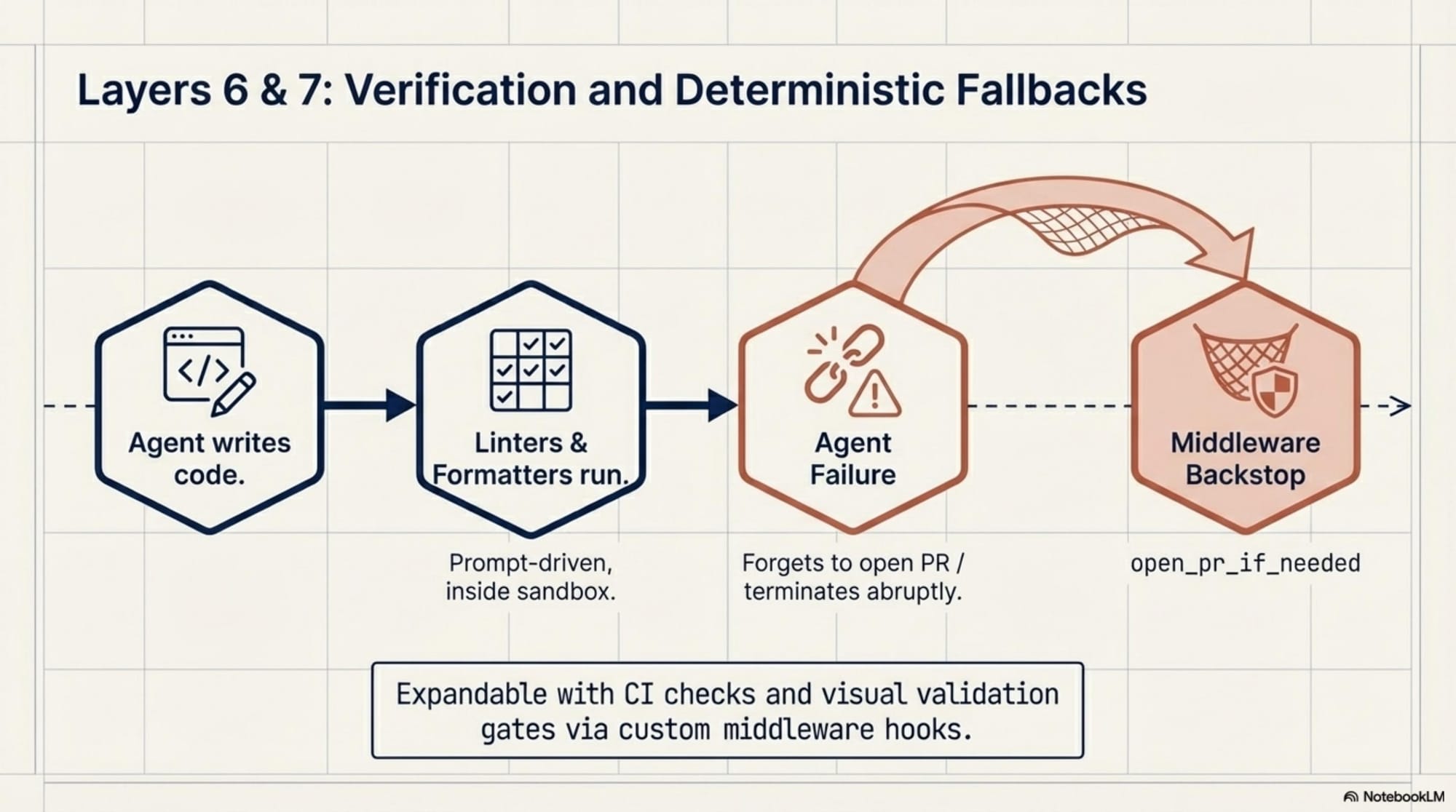

Agentic & Deterministic Orchestration

The framework balances AI decision-making with rock-solid reliability using sub-agents and middleware hooks:

- The main agent can spawn child agents for isolated sub-tasks using the `task` tool.
- Deterministic middleware wraps the agent loop. For example, `check_message_queue_before_model` injects late-arriving Slack or Linear comments mid-execution, and `open_pr_if_needed` acts as a safety net to automatically generate a commit and PR if the AI fails to do so before exiting.
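The interplay between the agent loop and deterministic middleware can be sketched in plain Python. Everything here is hypothetical scaffolding: `run_agent`, the scripted `fake_model`, and the two hook functions only mirror the roles the text assigns to `check_message_queue_before_model` and `open_pr_if_needed`; they are not the real Open SWE or LangGraph signatures.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class AgentState:
    messages: list[str] = field(default_factory=list)
    has_uncommitted_changes: bool = False
    pr_opened: bool = False


def check_message_queue_before_model(state: AgentState, queue: deque) -> None:
    """Before each model call, drain comments that arrived mid-run into the history."""
    while queue:
        state.messages.append(f"user (late): {queue.popleft()}")


def open_pr_if_needed(state: AgentState) -> None:
    """After the loop ends, commit and open a PR if the model did not."""
    if state.has_uncommitted_changes and not state.pr_opened:
        state.messages.append("middleware: committed changes and opened PR")
        state.pr_opened = True


def run_agent(model, state: AgentState, queue: deque, max_steps: int = 10) -> AgentState:
    for _ in range(max_steps):
        check_message_queue_before_model(state, queue)  # deterministic pre-model hook
        action = model(state)                           # non-deterministic model step
        if action == "done":
            break
        state.messages.append(f"agent: {action}")
        if action == "edit_file":
            state.has_uncommitted_changes = True
    open_pr_if_needed(state)  # deterministic safety net on exit
    return state


# Scripted stand-in for the LLM: edits once, then stops without opening a PR.
script = iter(["edit_file", "done"])


def fake_model(state: AgentState) -> str:
    return next(script)


inbox = deque(["Also update the changelog"])
final = run_agent(fake_model, AgentState(messages=["user: fix the bug"]), inbox)
print("\n".join(final.messages))
```

The model decides what to do at each step, but the hooks run unconditionally, which is what makes them dependable safety nets.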

---

How Teams Can Build Internal Coding Agents

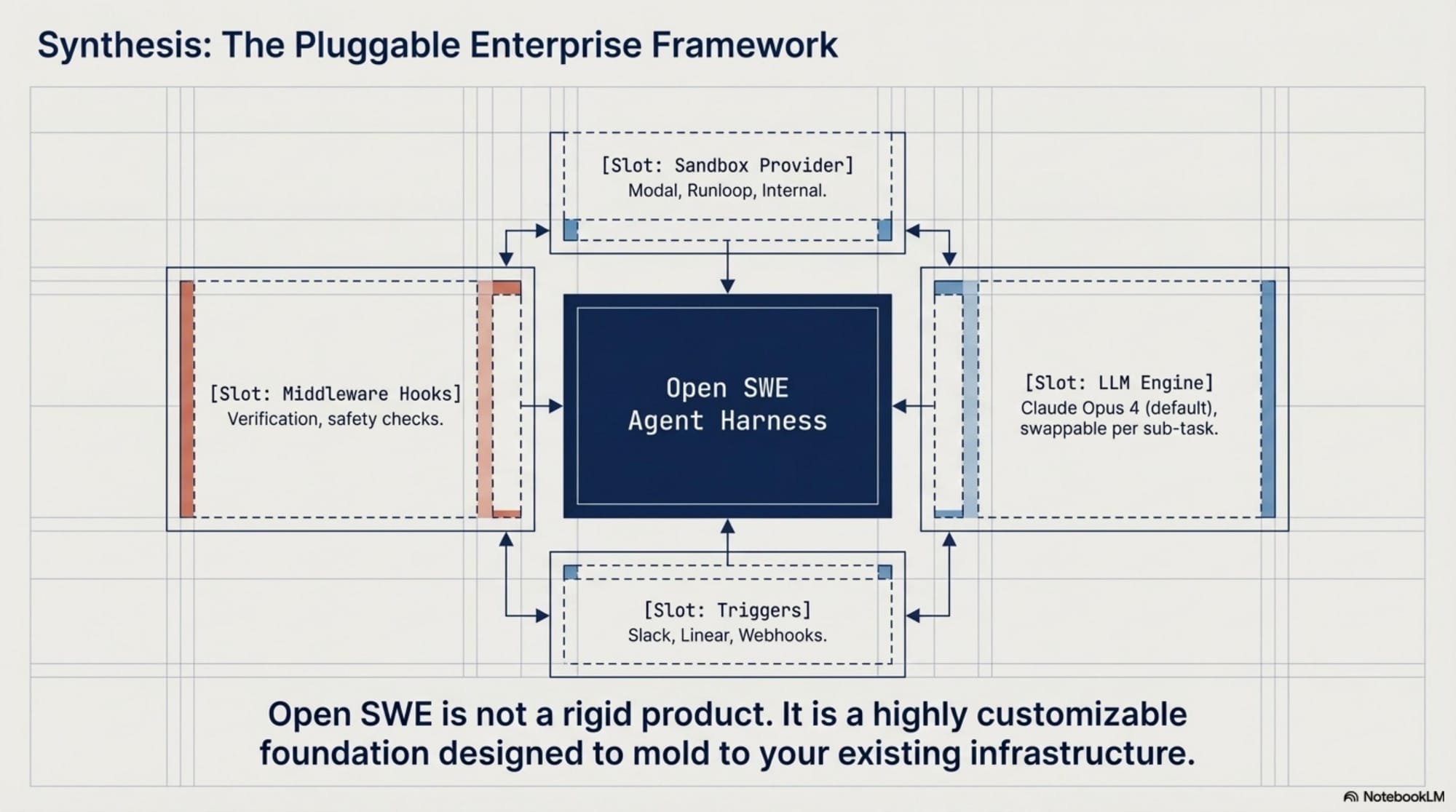

Open SWE is built to be a customizable foundation, not a rigid, finished product. Because every major component is pluggable, engineering teams can tailor it to their specific internal infrastructure:

Integrate Custom Sandboxes

While Open SWE natively supports providers like Modal, Daytona, Runloop, and LangSmith, teams can easily implement their own backend for internal infrastructure requirements.

Bring Your Own Models & Tools

You can utilize any LLM provider (defaulting to Claude Opus 4) and configure different models for specific sub-tasks. Crucially, you can explicitly add internal APIs, custom deployment triggers, and testing frameworks to the agent's toolset.

Configure Workflows & Triggers

Out of the box, the framework supports Slack (mentioning the bot with `repo:owner/name`), Linear (commenting `@openswe`), and GitHub (tagging the bot in PR reviews). Teams can write custom middleware to add verification steps, visual checks, or required review gates before code is committed.

---

Getting Started

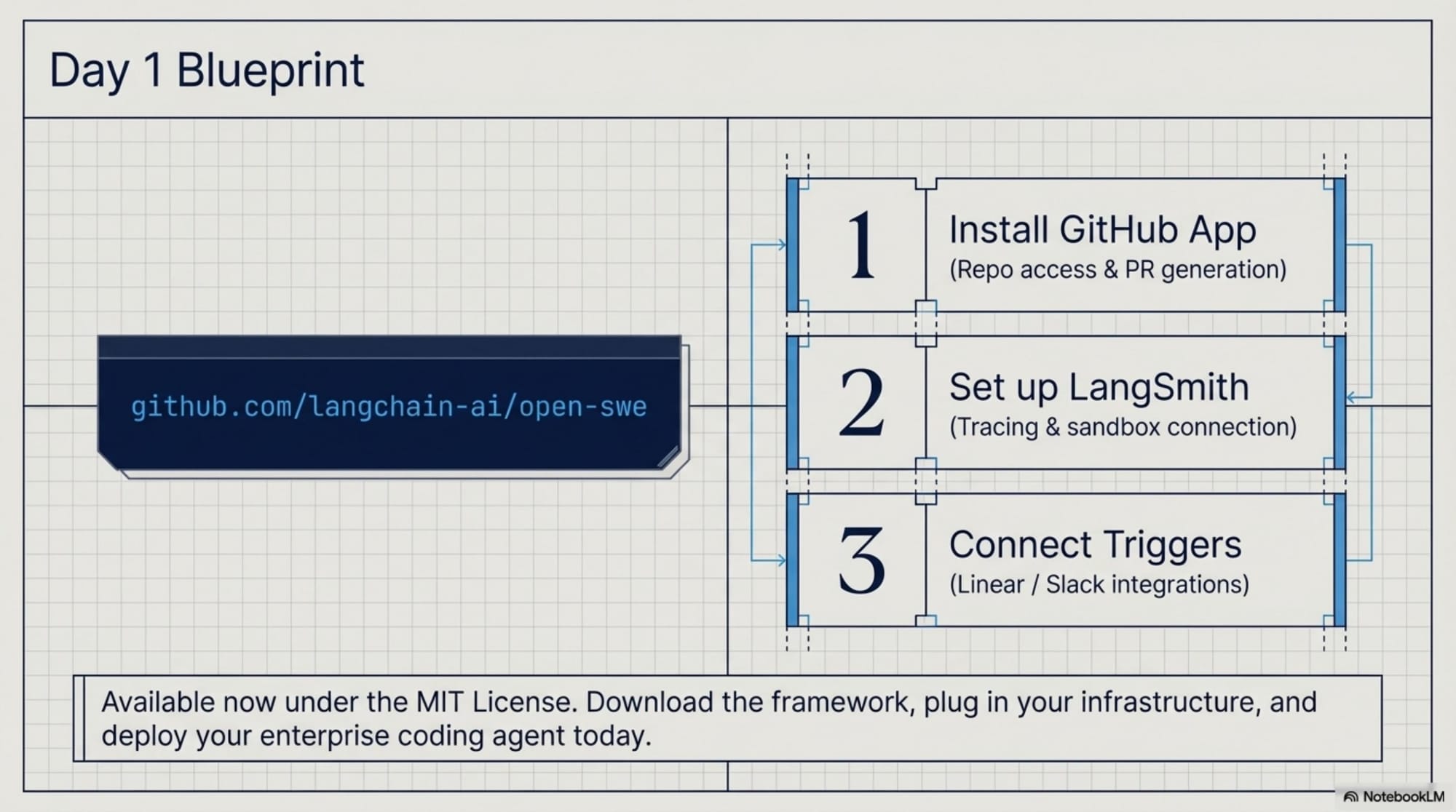

Actionable Insight for Deployment: Start small. You can find the Open SWE framework on GitHub (`langchain-ai/open-swe`). Follow the installation guide to set up the GitHub App and LangSmith tracking, and focus initially on defining a robust `AGENTS.md` file for your most active repository. By keeping your toolset strictly curated and leveraging the native Slack integration, you can quickly provide your engineering team with a powerful, context-aware coding assistant that fits seamlessly into their daily workflow.

---

Key Takeaways

- Production-tested patterns: Open SWE distills learnings from Stripe, Ramp, and Coinbase into a reusable framework
- Composition over forking: Upgrade the core while keeping your customizations
- Isolated sandboxes: Safe execution with full permissions within boundaries
- Curated tools: Quality over quantity in tool selection
- Dual-layer context: Repository knowledge + task-specific context
- Deterministic safety nets: Middleware ensures reliable operations

The era of building internal coding agents from scratch is over. Open SWE provides the blueprint.

📄 Briefing Doc: Technical Analysis

📄 Open SWE Framework (Technical Deep Dive)

# Technical Briefing: Open SWE Framework

Executive Summary

Open SWE is an open-source framework (MIT license) for building internal AI coding agents, based on architectural patterns independently developed by Stripe, Ramp, and Coinbase. Built on Deep Agents and LangGraph, it provides a production-tested starting point for teams implementing internal coding agents.

---

Architecture Overview

Core Stack

- Base Framework: Deep Agents + LangGraph
- Language: Python
- Sandbox Providers: Modal, Daytona, Runloop, LangSmith (pluggable)
- Default LLM: Claude Opus 4 (configurable)

Key Components

#### 1. Agent Harness (Deep Agents)

- Composition-based architecture (not forking)
- File-based context management
- Built-in planning via `write_todos`
- Native sub-agent spawning via `task` tool
- Middleware hooks for deterministic orchestration
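To illustrate why isolated sub-agent context keeps the parent's history clean, here is a sketch in which a hypothetical `task` function runs a child with a fresh message list and returns only a summary to the parent. The `SubAgent` class and the summary format are assumptions; the real `task` tool's behavior and return value may differ.

```python
from dataclasses import dataclass, field


@dataclass
class SubAgent:
    """Hypothetical child agent: starts with only its own instructions."""
    name: str
    messages: list[str] = field(default_factory=list)

    def run(self, instructions: str) -> str:
        self.messages.append(f"task: {instructions}")
        # Stand-in for many intermediate tool calls that stay in the child's context.
        for i in range(3):
            self.messages.append(f"{self.name} step {i}: read and analyze files")
        return f"{self.name} finished: {instructions}"


def task(parent_messages: list[str], name: str, instructions: str) -> str:
    child = SubAgent(name)                         # fresh, isolated context
    summary = child.run(instructions)
    parent_messages.append(f"result: {summary}")   # only the summary flows back
    return summary


parent = ["user: migrate the billing module"]
task(parent, "test-writer", "add tests for invoice rounding")
task(parent, "refactorer", "extract the tax calculator")
print(parent)
```

The parent ends up with one line per delegated sub-task rather than every intermediate step, which is the "prevents conversation history pollution" benefit listed under Orchestration Mechanisms.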

#### 2. Sandboxed Execution

- Isolated cloud Linux environments per conversation thread
- Persistent sandboxes reused across messages
- Auto-regeneration on sandbox unavailability
- Parallel task execution across multiple sandboxes
- Full shell access within strict boundaries
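The per-thread sandbox lifecycle described above (reuse across messages, regeneration when unreachable, parallel threads) can be sketched with an in-memory stand-in. `FakeSandbox`, `SandboxPool`, and the `is_alive` health check are hypothetical; a real provider such as Modal or Daytona would create remote environments through its own SDK.

```python
import itertools
import threading
from concurrent.futures import ThreadPoolExecutor

_ids = itertools.count(1)


class FakeSandbox:
    """Hypothetical stand-in for a remote Linux sandbox."""

    def __init__(self) -> None:
        self.id = next(_ids)
        self.alive = True

    def is_alive(self) -> bool:
        return self.alive

    def run(self, command: str) -> str:
        return f"sandbox-{self.id}$ {command}"


class SandboxPool:
    """One persistent sandbox per thread ID, recreated if it becomes unreachable."""

    def __init__(self) -> None:
        self._by_thread: dict[str, FakeSandbox] = {}
        self._lock = threading.Lock()

    def get(self, thread_id: str) -> FakeSandbox:
        with self._lock:
            sandbox = self._by_thread.get(thread_id)
            if sandbox is None or not sandbox.is_alive():
                sandbox = FakeSandbox()  # a real backend would also clone the repo here
                self._by_thread[thread_id] = sandbox
            return sandbox


pool = SandboxPool()
first = pool.get("thread-a")
again = pool.get("thread-a")        # same object: reused across messages
first.alive = False                 # simulate an unreachable sandbox
replacement = pool.get("thread-a")  # regenerated transparently

# Different threads get different sandboxes and can run in parallel.
with ThreadPoolExecutor() as executor:
    outputs = list(executor.map(lambda t: pool.get(t).run("pytest -q"), ["t1", "t2", "t3"]))
print(outputs)
```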

#### 3. Tool System

Built-in tools:

- `read_file`, `write_file`, `edit_file`
- `ls`, `glob`, `grep`
- `write_todos` (task planning)
- `task` (sub-agent spawning)

Custom tools can be added for:

- Internal APIs
- Custom deployment systems
- Specialized testing frameworks
- Monitoring platforms
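One way to enforce curation rather than accumulation is to require every custom tool to carry an owner and an explicit review flag before it is exposed to the agent. `ToolSpec`, `CuratedToolRegistry`, and the example `get_deploy_status` tool are hypothetical; Open SWE's own tool-registration mechanism is not shown here.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ToolSpec:
    name: str
    description: str
    owner: str        # team accountable for keeping the tool working
    reviewed: bool    # set only after an explicit review
    fn: Callable[..., str]


class CuratedToolRegistry:
    def __init__(self) -> None:
        self._tools: dict[str, ToolSpec] = {}

    def register(self, spec: ToolSpec) -> None:
        if not spec.reviewed:
            raise ValueError(f"{spec.name}: tool must be reviewed before exposure")
        if not spec.description.strip():
            raise ValueError(f"{spec.name}: a description is required for the model")
        if spec.name in self._tools:
            raise ValueError(f"{spec.name}: duplicate tool name")
        self._tools[spec.name] = spec

    def exposed(self) -> list[str]:
        return sorted(self._tools)


def get_deploy_status(service: str) -> str:
    """Hypothetical internal API wrapper."""
    return f"{service}: healthy"


registry = CuratedToolRegistry()
registry.register(ToolSpec("get_deploy_status", "Check the rollout status of a service.",
                           "platform-team", True, get_deploy_status))

rejected = None
try:
    registry.register(ToolSpec("run_sql", "Run arbitrary SQL.", "unknown", False, lambda q: q))
except ValueError as err:
    rejected = str(err)
print("rejected:", rejected)
print(registry.exposed())
```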

#### 4. Context Engineering

Repository Context (AGENTS.md)

- Placed at repository root
- Encodes team conventions, testing requirements, architecture decisions
- Injected into system prompt

Task-Specific Context

- Linear issues (title, description, comments)
- Slack thread history
- GitHub PR context
- Aggregated before agent starts

#### 5. Orchestration Mechanisms

Sub-Agents

- Spawned via `task` tool
- Isolated context per sub-agent
- Prevents conversation history pollution
- Clearer reasoning on complex tasks

Middleware Hooks

- `check_message_queue_before_model`: Injects late-arriving messages
- `open_pr_if_needed`: Auto-commit and PR creation safety net
- `ToolErrorMiddleware`: Graceful error handling
- Custom middleware for CI checks, visual validation, review gates

#### 6. Invocation Interfaces

Slack

- Mention bot in thread
- Use `repo:owner/name` syntax to specify repository
- Agent responds with status updates and PR links in thread

Linear

- Comment `@openswe` on issue
- Agent reads full issue context
- Responds with 👀 reaction and results as comment

GitHub

- Tag `@openswe` in PR comments
- Agent handles review feedback
- Pushes changes to same branch

Thread Routing

- Deterministic thread ID generation
- Subsequent messages route to same running agent instance

---

Production Patterns (from Stripe, Ramp, Coinbase)

Convergent Architecture Decisions

Despite independent development, these companies arrived at similar patterns:

1. Isolated Execution: Cloud sandboxes with full permissions within boundaries
2. Curated Toolsets: ~500 tools at Stripe, but carefully selected and maintained
3. Slack Integration: Primary interface to avoid context switching
4. Rich Starting Context: Pull from Linear, Slack, GitHub before starting
5. Sub-Agent Decomposition: Complex tasks broken into isolated child agents

Critical Insights

- Curation > Quantity: Focus on tool quality, not count
- Context Pre-loading: Reduce discovery overhead by providing context upfront
- Deterministic Safety Nets: Middleware ensures reliability without restricting AI flexibility
- Workflow Integration: Meet developers where they already work (Slack)

---

Customization Points

All major components are pluggable:

| Component | Options | Custom Implementation |
|-----------|---------|----------------------|
| Sandbox | Modal, Daytona, Runloop, LangSmith | Yes |
| Model | Any LLM provider | Yes |
| Tools | Built-in set + custom additions | Yes |
| Triggers | Slack, Linear, GitHub | Yes (email, webhooks, custom UI) |
| System Prompts | Default + AGENTS.md | Yes |
| Middleware | Built-in hooks | Yes (custom validation, gates) |
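Because the sandbox is pluggable, an internal backend mostly comes down to satisfying a small interface. The `SandboxBackend` protocol below, its three methods, and `LocalDirBackend` are hypothetical illustrations; `CUSTOMIZATION.md` in the repository describes the interface Open SWE actually expects. The local backend only runs commands in a scratch directory and provides no real isolation, so it is suitable for tests only.

```python
import subprocess
import sys
import tempfile
from pathlib import Path
from typing import Protocol


class SandboxBackend(Protocol):
    def execute(self, command: list[str]) -> tuple[int, str]: ...
    def write_file(self, path: str, content: str) -> None: ...
    def read_file(self, path: str) -> str: ...


class LocalDirBackend:
    """Test-only backend: a scratch directory, not an isolated environment."""

    def __init__(self) -> None:
        self._dir = tempfile.TemporaryDirectory()
        self.root = Path(self._dir.name)

    def execute(self, command: list[str]) -> tuple[int, str]:
        proc = subprocess.run(command, cwd=self.root, capture_output=True, text=True, timeout=60)
        return proc.returncode, proc.stdout + proc.stderr

    def write_file(self, path: str, content: str) -> None:
        target = self.root / path
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(content)

    def read_file(self, path: str) -> str:
        return (self.root / path).read_text()


def smoke_test(backend: SandboxBackend) -> str:
    """Any object with these three methods works; no inheritance is required."""
    backend.write_file("hello.py", "print('hi from sandbox')")
    code, output = backend.execute([sys.executable, "hello.py"])
    return output.strip() if code == 0 else f"failed: {output}"


print(smoke_test(LocalDirBackend()))
```

Using a structural `Protocol` keeps the agent code independent of any one provider: a remote backend only needs the same three methods.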

---

Getting Started

Prerequisites

- GitHub App setup
- LangSmith configuration
- Sandbox provider account (Modal, Daytona, etc.)
- Slack/Linear/GitHub integrations (optional)

Installation

```bash
# Clone repository
git clone https://github.com/langchain-ai/open-swe.git
cd open-swe

# Follow INSTALLATION.md for:
# - GitHub App creation
# - LangSmith setup
# - Trigger configuration
# - Production deployment
```

First Steps

1. Create `AGENTS.md` in your most active repository
2. Define team conventions, testing requirements, architecture decisions
3. Start with curated toolset (add internal tools gradually)
4. Test Slack integration in development channel
5. Iterate on system prompts based on team feedback

---

Security Considerations

- Sandboxes isolate execution but have full permissions within boundaries
- Review all tools added to agent's toolset
- Implement approval gates via custom middleware for sensitive operations
- Monitor agent actions via LangSmith tracing
- Regular security audits of `AGENTS.md` and system prompts
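An approval gate for sensitive operations can be expressed as a deterministic check that runs before a shell command executes. The patterns, the `ApprovalRequired` exception, and the `gate_shell_command` helper are hypothetical; in practice the gate would more likely pause the run and ask a human in Slack or a PR review than raise an error.

```python
import re

# Hypothetical policy: commands that must be approved by a human first.
SENSITIVE_PATTERNS = [
    re.compile(r"\bgit\s+push\b.*\s(--force|-f)\b"),
    re.compile(r"\bterraform\s+apply\b"),
    re.compile(r"\bkubectl\s+delete\b"),
]


class ApprovalRequired(Exception):
    pass


def gate_shell_command(command: str, approved: set[str]) -> str:
    """Let ordinary commands through; block sensitive ones unless explicitly approved."""
    if any(p.search(command) for p in SENSITIVE_PATTERNS) and command not in approved:
        raise ApprovalRequired(f"needs approval: {command}")
    return command


approvals: set[str] = set()
for cmd in ["pytest -q", "git push --force origin main", "terraform apply"]:
    try:
        gate_shell_command(cmd, approvals)
        print("allowed:", cmd)
    except ApprovalRequired as exc:
        print("blocked:", exc)
```

Because the check is plain code rather than a prompt instruction, it holds even if the model is convinced the command is safe.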

---

Comparison with Internal Implementations

| Aspect | Stripe Minions | Ramp Inspect | Coinbase Cloudbot | Open SWE |
|--------|---------------|--------------|-------------------|----------|
| Core Patterns | ✅ Similar | ✅ Similar | ✅ Similar | ✅ Same |
| Implementation | Proprietary | Proprietary | Proprietary | Open Source |
| Customization | Fork required | Fork required | Fork required | Config-based |
| Upgrade Path | Manual | Manual | Manual | Automatic |
| Tool Integration | Internal APIs | Internal APIs | Internal APIs | Pluggable |

Key Difference: Open SWE provides the architectural blueprint without requiring teams to rebuild from scratch or fork existing agents.

---

Resources

- GitHub: https://github.com/langchain-ai/open-swe
- Installation Guide: `INSTALLATION.md`
- Customization Guide: `CUSTOMIZATION.md`
- License: MIT

Sources

1. GeekNews article: "Open SWE: An Open-Source Framework for Internal Coding Agents" (in Korean) - https://news.hada.io/topic?id=27604
2. Open SWE GitHub Repository - https://github.com/langchain-ai/open-swe
3. LangChain Tweet Announcement - https://x.com/LangChain/status/2033959303766512006

🔗 References

Primary Sources

- GeekNews: Open SWE, an Open-Source Framework for Internal Coding Agents (in Korean)

- LangChain — Open SWE Announcement on X

Company Case Studies

- Stripe — Minions: Stripe's One-Shot End-to-End Coding Agents

- Ramp — How Ramp Built a Full-Context Background Coding Agent on Modal

- Coinbase — Building Enterprise AI Agents at Coinbase

Related Technologies

- Deep Agents — GitHub Repository

- LangGraph — Documentation

This analysis was synthesized from industry reports, corporate engineering blogs, and open-source documentation. All company-specific information is based on publicly available resources.